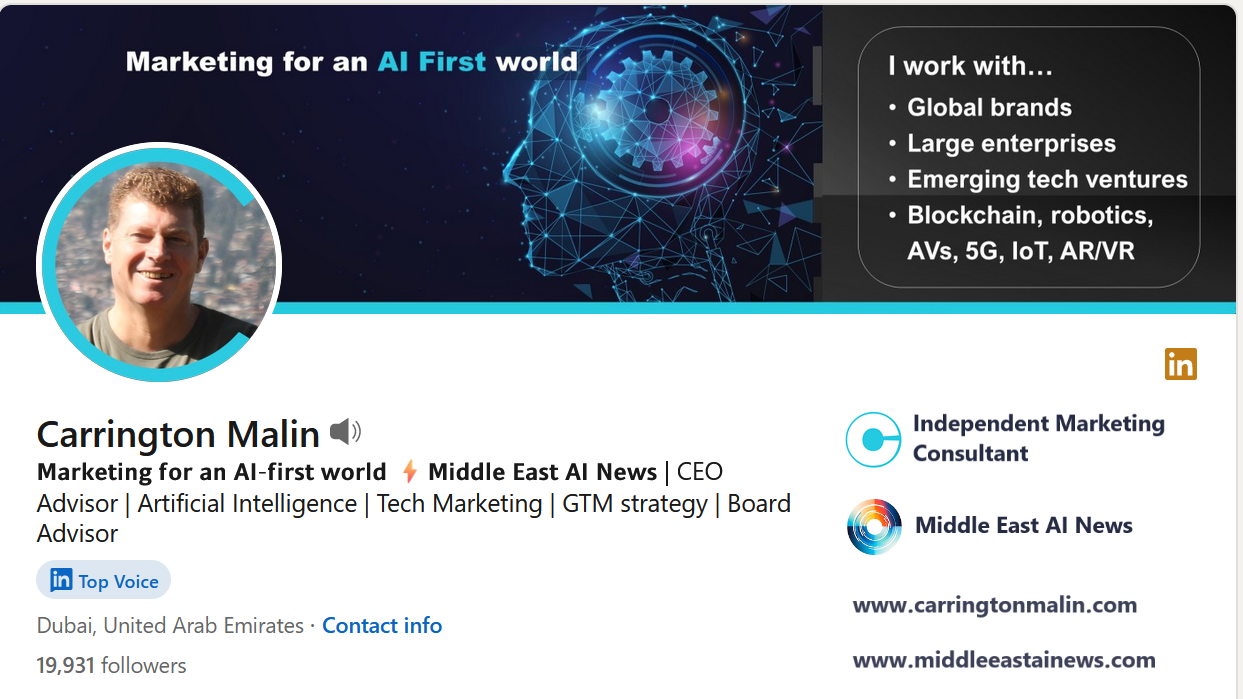

It’s 20 years now since I signed up for LinkedIn, so here are 20 things I’ve learned from the B2B social networking platform.

To mark my 20 years on LinkedIn, here are 20 things I’ve learned since I joined the B2B social networking platform on April 6th, 2004. Of course, my experience extends beyond LinkedIn, and these lessons are not exclusive to LinkedIn either.

- LinkedIn is hands-down the best social network for B2B. I’ve lost count of the number of customers I’ve signed as a result of LinkedIn outreach, inbound connections and referrals from my connections, not to mention paid-for LinkedIn marketing campaigns. That said, it’s critically important to respect the people that you target.

- Everyone has their own motivations for using LinkedIn. For some, it’s simply where they look for their next job. For some, it’s their way of staying up-to-date on business news and trends. Some want to sell. Some want to network. Some don’t actually know!

- Networking is great, but trust wins business and that doesn’t come quickly. I love connecting with interesting people on LinkedIn and I’m happy to try to help people that connect with me. However, any meaningful business involves a commitment on both sides and that requires trust. Just because someone accepts your invite to connect doesn’t mean that they have any reason to trust you.

- Different people can help you in different ways. A connection’s value to me often has nothing to do with their job title or where they work. Sure, it’s great to be connected with leaders in exciting roles around the world, but these are generally the connections that I have the least frequent contact with. When I’m asking for help, they are often too busy, or simply not online.

- When someone likes your post, it doesn’t mean that they’ve read it! People like LinkedIn posts for different reasons. They could be reciprocating your Likes on their posts, they might intend to read it later, or it could be just a random impulse to like something whilst scrolling! The people that actually read your posts are the minority.

- Success isn’t related to how successful people say they are. Or the number of likes someone gets! Some companies seem to develop cultures where liking your boss’s post is something you do, even if you disagree with everything they’ve ever done! Likewise, someone who has just achieved a success that others may only dream of may not even mention it on LinkedIn.

- Opinions are like – well, you know! Everyone is entitled to their opinion. Some like to share it widely, some only share their opinion when prompted by someone else’s content, some seem to bestow it on their followers like it is a rare gift to mankind! I often end up learning things from opinions that I completely disagree with.

- Some people just don’t Like or comment. However, some of them do read. Many times I’ve assumed, wrongly, that someone hasn’t read my article, blog or seen my LinkedIn post. Then, when I mention it to them later, they say “yes, of course, I read it”. That’s actually more valuable to me than a Like.

- LinkedIn’s algorithm favours mass media. I can’t count the number of times I’ve casually shared a news story from the mass media and seen it get ten, twenty or even thirty times as many Likes as original content that I’ve spent the whole day on. And this doesn’t seem to depend on the quality of the mass media story either!

- Don’t assume that connections actually read your profile! I receive messages virtually every day requesting calls and meetings to discuss potential projects, opportunities or collaboration ideas. Every week, at least one of those people has asked for the meeting because they’ve assumed they know something about me without reading my profile.

- LinkedIn profiles are two-dimensional, people are not. I’ve worked hard on my LinkedIn profile to ensure that it represents me well, appears in the right searches and highlights what I offer to followers, connections and business contacts. However, whilst profiles are a great tool to help you learn more about someone, there’s much that you cannot learn about someone from their LinkedIn profile. Don’t assume too much!

- Your story is your story. Your brand is your brand. Still, it may take you a while to figure out how to tell your story via LinkedIn. Do seek advice and listen to feedback or suggestions for your LinkedIn activities. However, remember that what works great for someone else on LinkedIn may not suit you. Don’t adopt someone else’s tactics or content ideas unless you think they will resonate with your most valuable audiences.

- Automating poorly harms your brand. There are many tools that promise to make your life easier on LinkedIn, notably GenAI content tools, message automation tools and LinkedIn marketing tools themselves. You have to ask yourself how you would feel when seeing such content or receiving such messages. If you don’t actually know, because you don’t pay that much attention to your own automated content, then you’re likely doing yourself and/or your brand more harm than good.

- Yes, there are fakes and frauds on LinkedIn. As with all social platforms, LinkedIn gets its fair share of fakes and frauds. Sometimes these are easy to spot, sometimes not so easy. A new profile with few details, few connections and a professionally taken photo of a pretty woman is one of the easiest fakes to spot! When in doubt, there is always the option to decline the invite to connect, or even delete the connection!

- Connection without conversation is pointless. I always start a conversation with new connections via a brief intro message. I want them to know what my focus is in case there is an opportunity to cooperate with them in the future, and I want to understand what they do for the same reason. At the very least, I’ll be able to go back to that conversation later to remind myself why we connected in the first place.

- Referrals have more value than cold approaches. Any 2nd level LinkedIn connection is connected to one of your existing connections. That means that it’s possible you could get introduced by one of your existing connections. When you actually have an opportunity to talk to a 2nd level connection, a referral from one of your 1st level connections will help you build trust faster.

- A little empathy and respect cost you nothing! Everyone is at a different point in their own journey. LinkedIn has members that range from students through to retirees. There are over 200 nationalities on LinkedIn and so many users that don’t have English as their first language. It’s easy to be dismissive of posts, messages or comments because they don’t fit your own world-view. It’s just as easy to be kind.

- Most people will say nothing. Regardless of whether they agree or disagree, or approve or disapprove, most people won’t comment on your posts. Just like most people won’t comment on that new “visionary leader” title that you’ve just given yourself. Nor tell you how impressed they were by something you shared last week. So, don’t read too much into people’s feedback on LinkedIn, or lack of it.

- Persistence, resilience and balance! Everyone needs to pick their own comfort level with LinkedIn. However, if you expect to get business results from LinkedIn, it pays to be persistent. Big followings and engaged connections can take years to build, including periods of time when your efforts may not be rewarded. How much effort you put in needs to align with your ultimate goals. There’s nothing wrong with just spending an hour on LinkedIn each week, just don’t expect to become a shooting star.

- You reap what you sow. What value you get out of LinkedIn depends heavily on what value you put in. Be interesting, people will be interested. Be helpful, people will be helpful in return. Post frequently and even LinkedIn’s algorithm will be more supportive of your efforts.

I hope that you enjoyed my list of ‘20 things I’ve learned from LinkedIn’. If you haven’t yet found me on LinkedIn, click here.

Read my last article about LinkedIn: